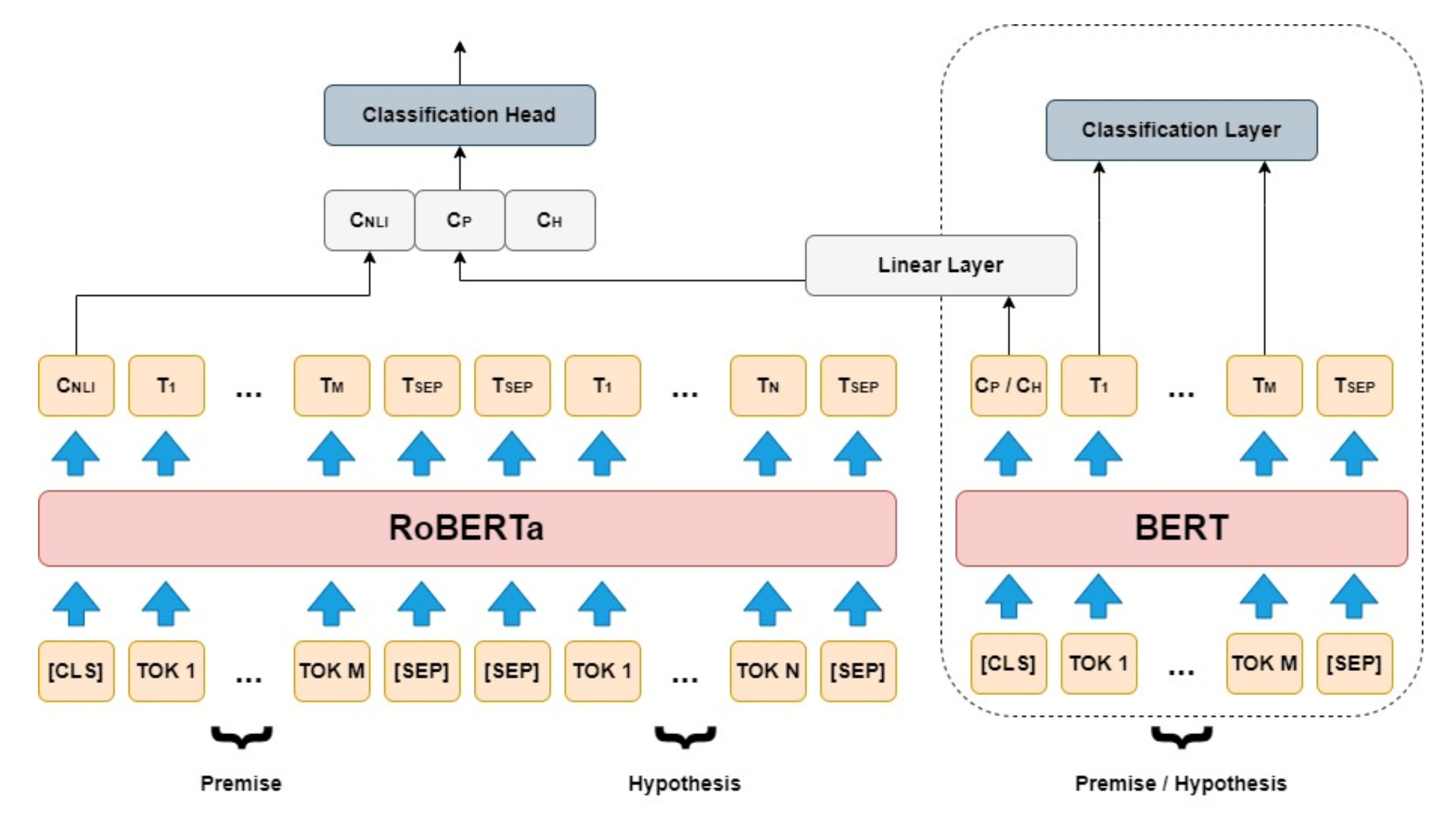

![Contextualized Embeddings based Transformer Encoder for Sentence Similarity Modeling in Answer Selection Task | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/3bb54a4663da3ab3b5766c61fb9025348bce2182/3-Figure1-1.png)

[PDF] Contextualized Embeddings based Transformer Encoder for Sentence Similarity Modeling in Answer Selection Task | Semantic Scholar

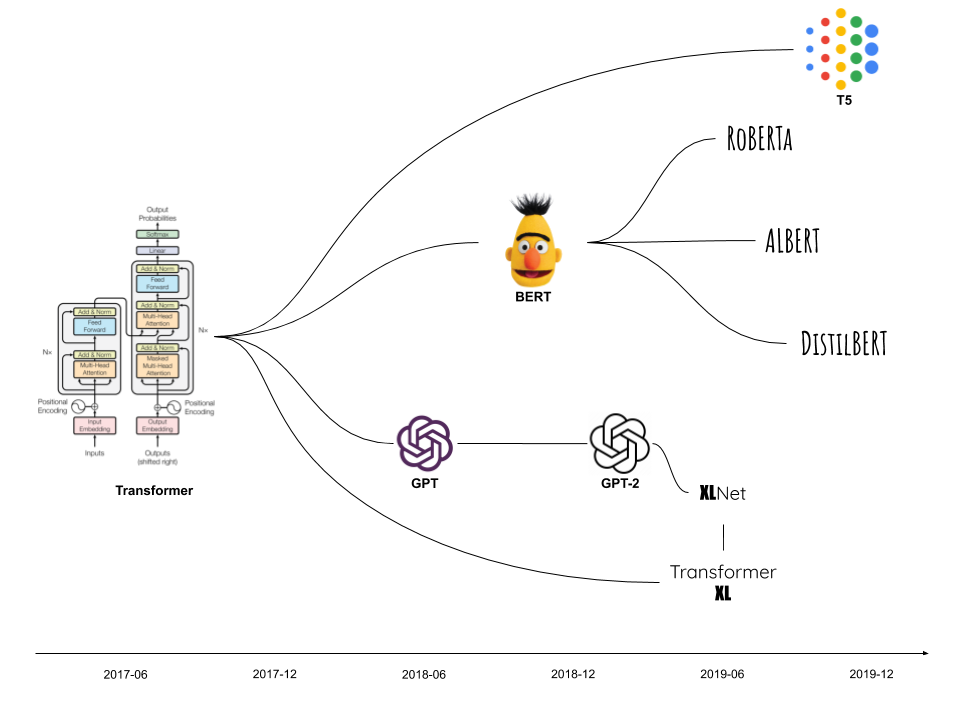

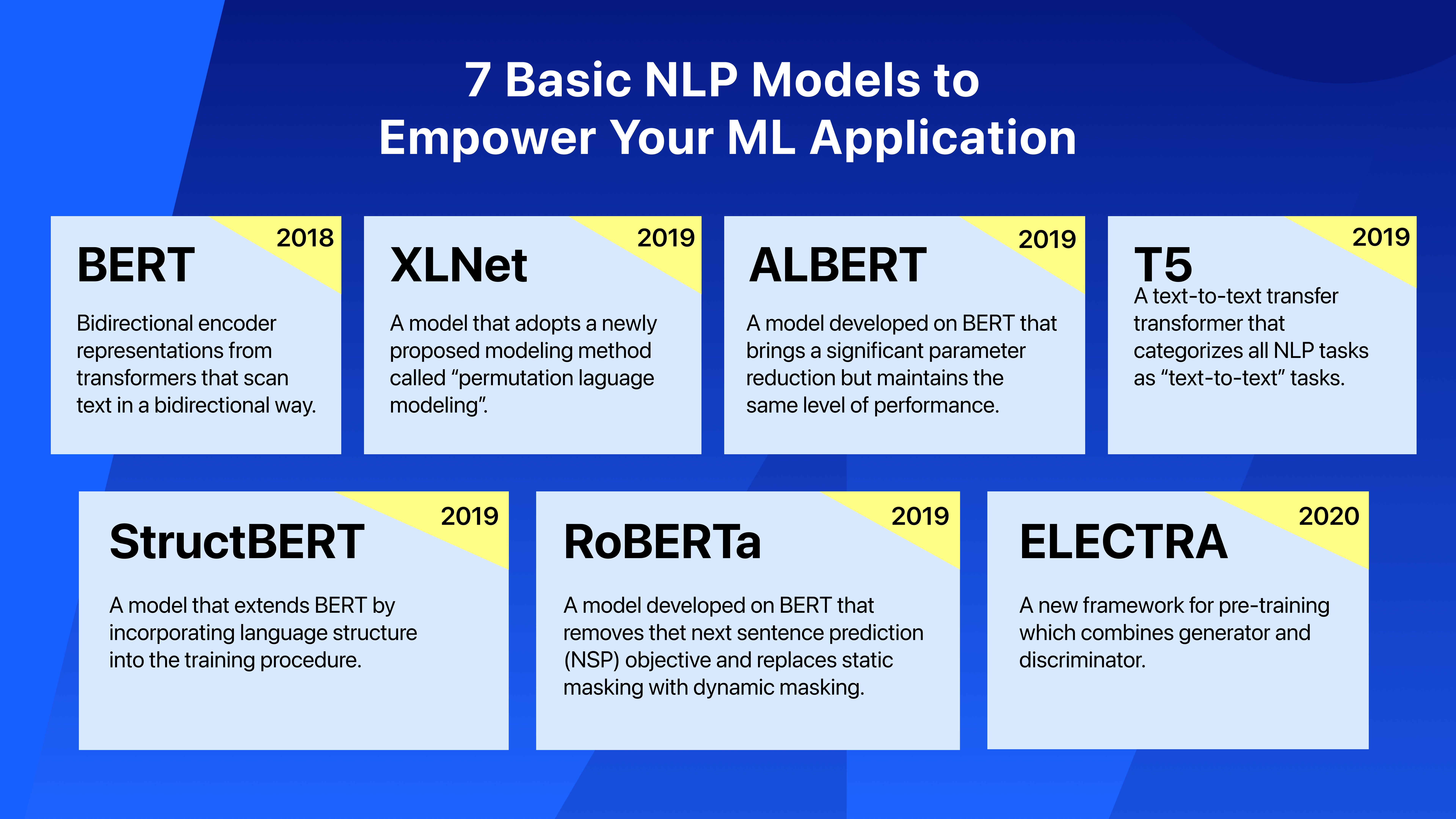

Modeling Natural Language with Transformers: Bert, RoBERTa and XLNet. – Cloud Computing For Science and Engineering

Adding RoBERTa NLP to the ONNX model zoo for natural language predictions - Microsoft Open Source Blog

BDCC | Free Full-Text | RoBERTaEns: Deep Bidirectional Encoder Ensemble Model for Fact Verification | HTML

Transformers for Natural Language Processing: Build innovative deep neural network architectures for NLP with Python, PyTorch, TensorFlow, BERT, RoBERTa, and more: Rothman, Denis: 9781800565791: Amazon.com: Books

LAMBERT model architecture. Differences with the plain RoBERTa model... | Download Scientific Diagram

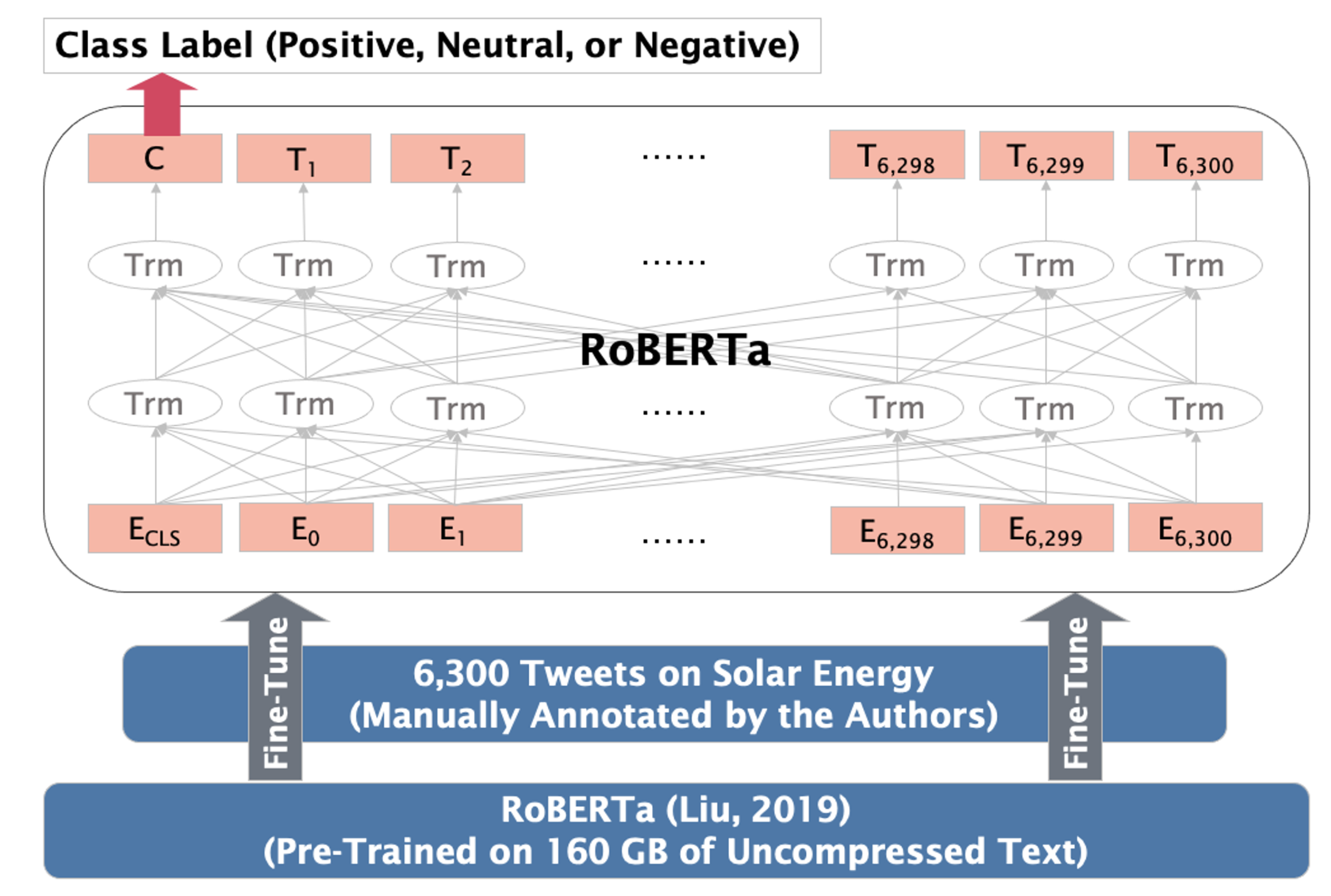

Sustainability | Free Full-Text | Public Sentiment toward Solar Energy—Opinion Mining of Twitter Using a Transformer-Based Language Model | HTML

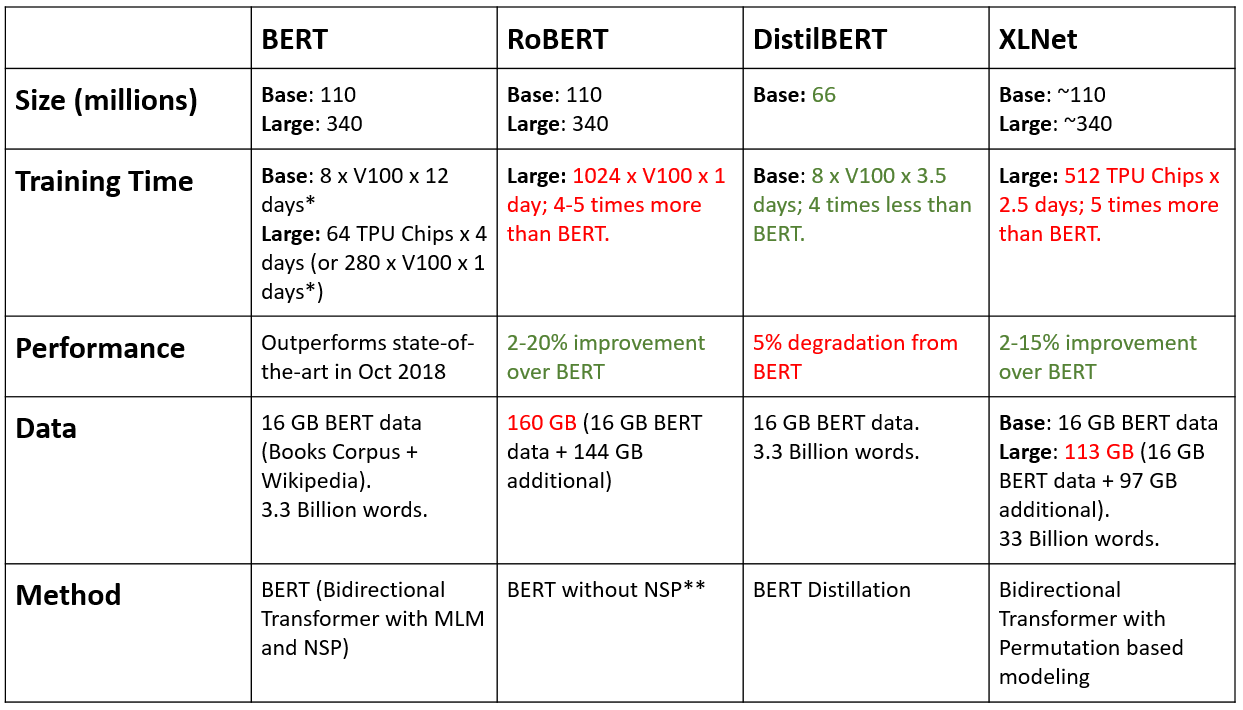

RoBERTa — Robustly optimized BERT approach: Better than XLNet without Architectural Changes to the Original BERT - KiKaBeN

Transformers | Fine-tuning RoBERTa with PyTorch | by Peggy Chang | Towards Data Science

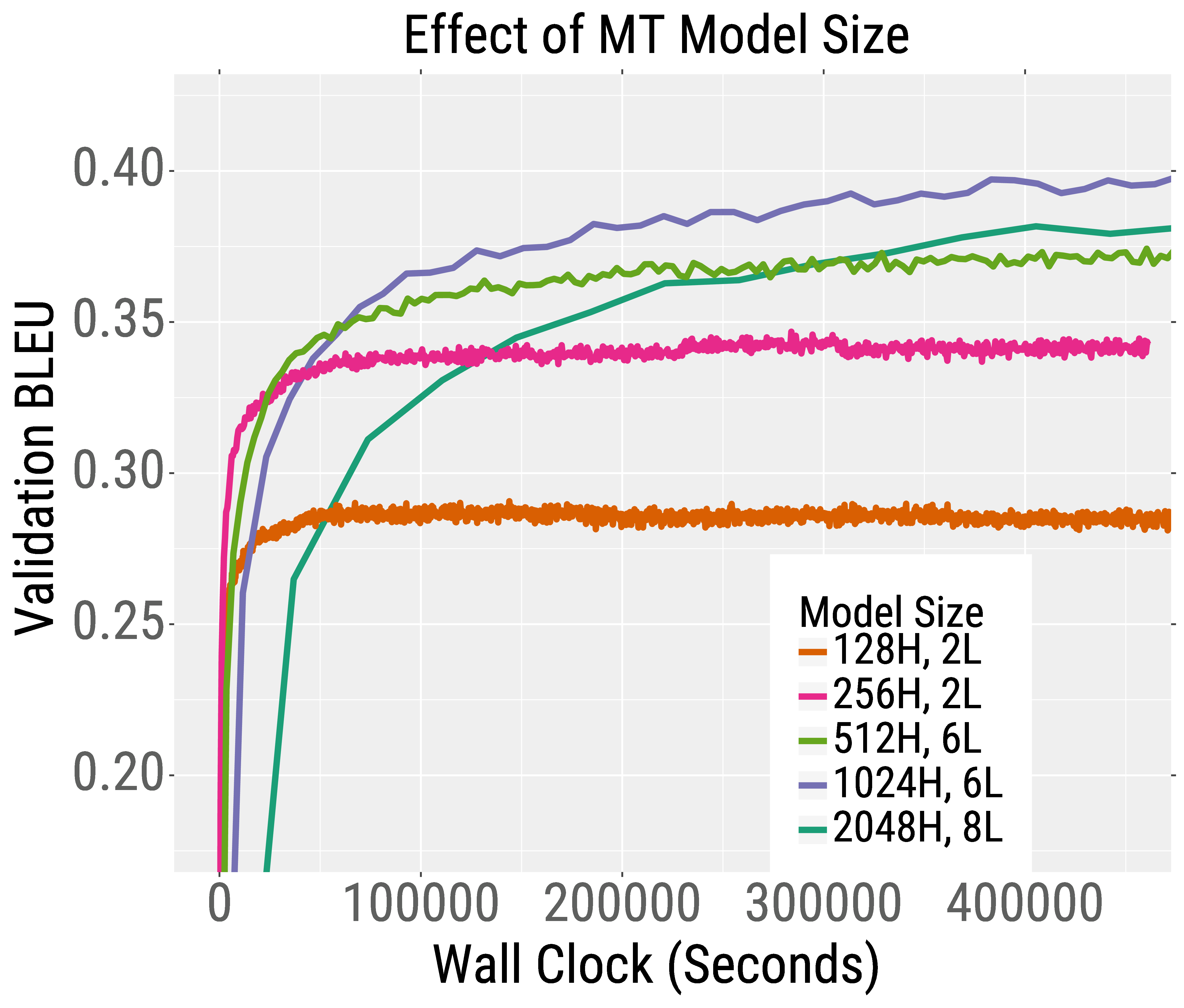

Speeding Up Transformer Training and Inference By Increasing Model Size – The Berkeley Artificial Intelligence Research Blog

SimpleRepresentations: BERT, RoBERTa, XLM, XLNet and DistilBERT Features for Any NLP Task | by Ali Hamdi Ali Fadel | The Startup | Medium